This extensively reworked and updated new edition of Speech Synthesis and Recognition is an easy-to-read introduction to current speech technology. Aimed at advanced undergraduates and graduates in electronic engineering, computer science and information technology, the emphasis is on explaining underlying principles with sufficient but not unnecessary detail, so as to provide the reader with a thorough grounding in the problems and techniques in speech synthesis and recognition. It is ideal as an introduction before tackling more advanced texts.

No advanced mathematical ability is required and no specialist prior knowledge of phonetics or of the properties of speech signals is assumed.

With the growing impact of information technology on daily life, speech is becoming increasingly important for providing a natural means of communication between humans and machines. Aimed at advanced undergraduates and graduates in electronic engineering, computer science and information technology, the book is also relevant to professional engineers who need to understand enough about speech technology to be able to apply it successfully and to work effectively with speech experts.

Author: Wendy Holmes. Language: en. Publisher: CRC Press.

Author: A., for the National Academy of Sciences. Language: en. Publisher: National Academies Press. Description: Science fiction has long been populated with conversational computers and robots.

Now, speech synthesis and recognition have matured to where a wide range of real-world applications, from serving people with disabilities to boosting the nation's competitiveness, are within our grasp. Voice Communication Between Humans and Machines takes the first interdisciplinary look at what we know about voice processing, where our technologies stand, and what the future may hold for this fascinating field. The volume integrates theoretical, technical, and practical views from world-class experts at leading research centers around the world, reporting on the scientific bases behind human-machine voice communication, the state of the art in computerization, and progress in user friendliness. It offers an up-to-date treatment of technological progress in key areas: speech synthesis, speech recognition, and natural language understanding. The book also explores the emergence of the voice processing industry and specific opportunities in telecommunications and other businesses, in military and government operations, and in assistance for the disabled. It also outlines practical issues and research questions that must be resolved if machines are to become fellow problem-solvers along with humans. Voice Communication Between Humans and Machines provides a comprehensive understanding of the field of voice processing for engineers, researchers, and business executives, as well as speech and hearing specialists, advocates for people with disabilities, faculty and students, and interested individuals.

Author: Manfred R. Schroeder. Language: en. Publisher: Springer Science & Business Media. Description: New material treats such contemporary subjects as automatic speech recognition and speaker verification for banking by computer and privileged (medical, military, diplomatic) information and control access. The book also focuses on speech and audio compression for mobile communication and the Internet. The importance of subjective quality criteria is stressed. The book also contains introductions to human monaural and binaural hearing, and the basic concepts of signal analysis.

Beyond speech processing, this revised and extended new edition of Computer Speech gives an overview of natural language technology and presents the nuts and bolts of state-of-the-art speech dialogue systems.

Author: Ann K. Syrdal. Language: en. Publisher: CRC Press. Description: Written by the world's top experts in the field, this multidisciplinary book explores all phases of speech technology.

Topics covered include:

- Conversion of computerized (keyboarded) text into synthesized speech, aimed at developing 'talking computers'
- Development of automatic speech recognition, allowing electronic devices to process verbal commands
- Speech training and the use of synthesized speech for the hearing- and speech-impaired

In-depth discussions of specific speech technologies are included, as well as a treatment of the issues and challenges of human-computer interfaces. Oriented toward state-of-the-art applications, the book emphasizes the practical utilization of emerging technologies and includes numerous case studies.

Author: Fang Chen. Language: en. Publisher: Springer Science & Business Media. Description: This book gives an overview of the research and application of speech technologies in different areas. One of the special characteristics of the book is that the authors take a broad view of the multiple research areas and take a multidisciplinary approach to the topics. One of the goals of this book is to emphasize application. User experience, human factors and usability issues are the focus of this book.

Speech synthesis is the artificial production of human speech.

A computer system used for this purpose is called a speech computer or speech synthesizer, and can be implemented in software or hardware products. A text-to-speech (TTS) system converts normal language text into speech; other systems render symbolic linguistic representations like phonetic transcriptions into speech. Synthesized speech can be created by concatenating pieces of recorded speech that are stored in a database. Systems differ in the size of the stored speech units; a system that stores phones or diphones provides the largest output range, but may lack clarity.

For specific usage domains, the storage of entire words or sentences allows for high-quality output. Alternatively, a synthesizer can incorporate a model of the vocal tract and other human voice characteristics to create a completely 'synthetic' voice output. The quality of a speech synthesizer is judged by its similarity to the human voice and by its ability to be understood clearly. An intelligible text-to-speech program allows people with visual impairments or reading disabilities to listen to written words on a home computer. Many computer operating systems have included speech synthesizers since the early 1990s.
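The idea of building output by concatenating stored pieces of recorded speech can be sketched as follows. This is only a minimal illustration, not how any particular product works: the unit inventory `UNIT_DB`, its sample values, and the linear crossfade at each join are all assumptions made for the example.

```python
# Hypothetical unit inventory: unit name -> list of audio samples (floats).
# A real system would hold recorded speech units here.
UNIT_DB = {
    "a": [0.0, 0.4, 0.8, 0.4, 0.0, -0.4],
    "b": [0.0, -0.2, -0.6, -0.2, 0.2, 0.0],
}


def crossfade_concat(units, overlap):
    """Join sample lists, linearly blending `overlap` samples at each boundary."""
    if not units:
        return []
    out = list(units[0])
    for unit in units[1:]:
        # Never overlap more samples than either side actually has.
        n = min(overlap, len(out), len(unit))
        start = len(out) - n
        for i in range(n):
            w = (i + 1) / (n + 1)  # fade-in weight of the incoming unit
            out[start + i] = (1.0 - w) * out[start + i] + w * unit[i]
        out.extend(unit[n:])
    return out


def synthesize(unit_names, overlap=2):
    """Look up each named unit and concatenate the results."""
    return crossfade_concat([UNIT_DB[name] for name in unit_names], overlap)
```

Calling `synthesize(["a", "b"])` returns one sample list that is shorter than the two units laid end to end by the number of overlapped samples. Smaller stored units give more freedom in what can be said but more joins, which is one reason output assembled from very small units may lack clarity.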

A text-to-speech system (or 'engine') is composed of two parts: a front-end and a back-end.

The front-end has two major tasks. First, it converts raw text containing symbols like numbers and abbreviations into the equivalent of written-out words. This process is often called text normalization, pre-processing, or tokenization. The front-end then assigns phonetic transcriptions to each word, and divides and marks the text into prosodic units, like phrases, clauses, and sentences. The process of assigning phonetic transcriptions to words is called text-to-phoneme or grapheme-to-phoneme conversion. Phonetic transcriptions and prosody information together make up the symbolic linguistic representation that is output by the front-end.
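The two front-end tasks described above can be sketched in a few lines. Everything named here is hypothetical and chosen only for illustration: the abbreviation table, the tiny pronunciation lexicon, its phoneme symbols, and the letter-by-letter fallback for unknown words. Real front-ends use far larger lexicons and rule sets, and also mark prosody, which this sketch omits.

```python
import re

# Hypothetical lookup tables, for illustration only.
ABBREVIATIONS = {"dr.": "doctor", "st.": "street", "no.": "number"}
ONES = ["zero", "one", "two", "three", "four", "five", "six", "seven", "eight", "nine"]
TEENS = ["ten", "eleven", "twelve", "thirteen", "fourteen", "fifteen",
         "sixteen", "seventeen", "eighteen", "nineteen"]
TENS = ["", "", "twenty", "thirty", "forty", "fifty", "sixty", "seventy", "eighty", "ninety"]
LEXICON = {"doctor": ["D", "AA", "K", "T", "ER"], "two": ["T", "UW"]}


def number_to_words(n):
    """Spell out an integer in the range 0..999."""
    if n < 10:
        return ONES[n]
    if n < 20:
        return TEENS[n - 10]
    if n < 100:
        tens, ones = divmod(n, 10)
        return TENS[tens] + ("" if ones == 0 else " " + ONES[ones])
    hundreds, rest = divmod(n, 100)
    words = ONES[hundreds] + " hundred"
    return words if rest == 0 else words + " " + number_to_words(rest)


def normalize(text):
    """Text normalization: expand abbreviations and small numbers into words."""
    words = []
    for token in text.lower().split():
        if token in ABBREVIATIONS:
            words.append(ABBREVIATIONS[token])
            continue
        token = token.strip(".,;:!?")
        if re.fullmatch(r"[0-9]+", token) and int(token) < 1000:
            words.extend(number_to_words(int(token)).split())
        elif token:
            words.append(token)
    return words


def to_phonemes(words):
    """Text-to-phoneme step: lexicon lookup, with letters as a placeholder fallback."""
    return [(word, LEXICON.get(word, list(word.upper()))) for word in words]
```

For instance, `normalize("Dr. Smith lives at 42 Main St.")` yields the word list `doctor smith lives at forty two main street`, which `to_phonemes` then maps to symbol sequences. A dictionary lookup is only one possible design; rule-based letter-to-sound conversion is another.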

The back-end, often referred to as the synthesizer, then converts the symbolic linguistic representation into sound. In certain systems, this part includes the computation of the target prosody (pitch contour, phoneme durations), which is then imposed on the output speech.

History

Long before the invention of electronic signal processing, some people tried to build machines to emulate human speech. Some early legends of the existence of 'Brazen Heads' involved Pope Silvester II (d. 1003 AD), Albertus Magnus (1198–1280), and Roger Bacon (1214–1294). In 1779 the German-Danish scientist Christian Gottlieb Kratzenstein won the first prize in a competition announced by the Russian Imperial Academy of Sciences and Arts for models he built of the human vocal tract that could produce the five long vowel sounds (in International Phonetic Alphabet notation: aː, eː, iː, oː and uː).

There followed the bellows-operated 'acoustic-mechanical speech machine' of Wolfgang von Kempelen of Pressburg, Hungary, described in a 1791 paper. This machine added models of the tongue and lips, enabling it to produce consonants as well as vowels. In 1837, Charles Wheatstone produced a 'speaking machine' based on von Kempelen's design, and in 1846, Joseph Faber exhibited the 'Euphonia'. In 1923 Paget resurrected Wheatstone's design. In the 1930s Bell Labs developed the vocoder, which automatically analyzed speech into its fundamental tones and resonances.

From his work on the vocoder, Homer Dudley developed a keyboard-operated voice-synthesizer called The Voder (Voice Demonstrator), which he exhibited at the 1939 New York World's Fair. Dr. Franklin S. Cooper and his colleagues at Haskins Laboratories built the Pattern playback in the late 1940s and completed it in 1950. There were several different versions of this hardware device; only one currently survives. The machine converts pictures of the acoustic patterns of speech in the form of a spectrogram back into sound. Using this device, Alvin Liberman and colleagues discovered acoustic cues for the perception of phonetic segments (consonants and vowels). Dominant systems in the 1980s and 1990s were the DECtalk system, based largely on the work of Dennis Klatt at MIT, and the Bell Labs system; the latter was one of the first multilingual language-independent systems, making extensive use of natural language processing methods.

Early electronic speech-synthesizers sounded robotic and were often barely intelligible. The quality of synthesized speech has steadily improved, but as of 2016 output from contemporary speech synthesis systems remains clearly distinguishable from actual human speech.

Kurzweil predicted in 2005 that as the cost-performance ratio caused speech synthesizers to become cheaper and more accessible, more people would benefit from the use of text-to-speech programs.

Electronic devices

The first computer-based speech-synthesis systems originated in the late 1950s. Noriko Umeda et al. developed the first general English text-to-speech system in 1968 at the Electrotechnical Laboratory, Japan.

In 1961 physicist John Larry Kelly, Jr and his colleague Louis Gerstman used an IBM 704 computer to synthesize speech, an event among the most prominent in the history of Bell Labs. Kelly's voice recorder synthesizer recreated the song 'Daisy Bell', with musical accompaniment from Max Mathews. Coincidentally, Arthur C. Clarke was visiting his friend and colleague John Pierce at the Bell Labs Murray Hill facility. Clarke was so impressed by the demonstration that he used it in the climactic scene of his screenplay for his novel 2001: A Space Odyssey, where the HAL 9000 computer sings the same song as astronaut Dave Bowman puts it to sleep. Despite the success of purely electronic speech synthesis, research into mechanical speech-synthesizers continues. Electronics featuring speech synthesis began emerging in the 1970s. One of the first was the Telesensory Systems Inc. (TSI) Speech+ portable calculator for the blind in 1976.

Other devices had primarily educational purposes, such as the Speak & Spell toy produced by Texas Instruments in 1978. Fidelity released a speaking version of its electronic chess computer in 1979. The first video game to feature speech synthesis was the 1980 arcade game Stratovox (known in Japan as Speak & Rescue), from Sun Electronics.

The first personal computer game with speech synthesis was Manbiki Shoujo (Shoplifting Girl), released in 1980 for the PET 2001, for which the game's developer, Hiroshi Suzuki, developed a 'zero cross' programming technique to produce a synthesized speech waveform. Another early example, the arcade version of Berzerk, also dates from 1980. The Milton Bradley Company produced the first multi-player electronic game using voice synthesis, Milton, in the same year.

Synthesizer technologies

The most important qualities of a speech synthesis system are naturalness and intelligibility. Naturalness describes how closely the output sounds like human speech, while intelligibility is the ease with which the output is understood.

The ideal speech synthesizer is both natural and intelligible. Speech synthesis systems usually try to maximize both characteristics.

The two primary technologies generating synthetic speech waveforms are concatenative synthesis and formant synthesis. Each technology has strengths and weaknesses, and the intended uses of a synthesis system will typically determine which approach is used.
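Formant synthesis uses no recorded speech; it shapes a simple excitation signal with resonances that stand in for the vocal tract. The sketch below assumes a standard second-order digital resonator and an impulse-train voice source. The formant frequencies and bandwidths in the usage note are rough illustrative values, not measurements, and the function names are invented for this example.

```python
import math


def resonator(signal, freq, bandwidth, sample_rate):
    """Second-order digital resonator: y[n] = a*x[n] + b*y[n-1] + c*y[n-2]."""
    t = 1.0 / sample_rate
    c = -math.exp(-2.0 * math.pi * bandwidth * t)
    b = 2.0 * math.exp(-math.pi * bandwidth * t) * math.cos(2.0 * math.pi * freq * t)
    a = 1.0 - b - c  # normalizes the gain at 0 Hz to one
    y1 = y2 = 0.0
    out = []
    for x in signal:
        y = a * x + b * y1 + c * y2
        out.append(y)
        y2, y1 = y1, y
    return out


def impulse_train(f0, duration, sample_rate):
    """Crude voiced excitation: one unit impulse per pitch period."""
    period = int(round(sample_rate / f0))
    n = int(round(duration * sample_rate))
    return [1.0 if i % period == 0 else 0.0 for i in range(n)]


def synthesize_vowel(formants, f0=120.0, duration=0.1, sample_rate=8000):
    """Pass the excitation through one resonator per (frequency, bandwidth) pair."""
    signal = impulse_train(f0, duration, sample_rate)
    for freq, bandwidth in formants:
        signal = resonator(signal, freq, bandwidth, sample_rate)
    return signal
```

A call such as `synthesize_vowel([(700.0, 80.0), (1200.0, 90.0), (2600.0, 120.0)])` produces 800 samples of a buzzy vowel-like sound. Changing only the numbers changes the vowel, which is the flexibility formant synthesis trades against the naturalness of recorded units.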

A study in the journal Speech Communication by Amy Drahota and colleagues at the University of Portsmouth, UK, reported that listeners to voice recordings could determine, at better than chance levels, whether or not the speaker was smiling.

It was suggested that identification of the vocal features that signal emotional content may be used to help make synthesized speech sound more natural. One of the related issues is modification of the pitch contour of the sentence, depending upon whether it is an affirmative, interrogative or exclamatory sentence. One of the techniques for pitch modification uses the discrete cosine transform (DCT) in the source domain (linear prediction residual). Such pitch-synchronous pitch modification techniques need a priori pitch marking of the synthesis speech database using techniques such as epoch extraction using the dynamic plosion index applied on the integrated linear prediction residual of the voiced regions of speech.

Dedicated hardware

Early technology (not available anymore):

- Votrax
  - SC-01A (analog formant)
  - SC-02 / SSI-263 / 'Artic 263'
- General Instrument SP0256-AL2 (CTS256A-AL2)
- DT1050 Digitalker (Mozer)
- Silicon Systems SSI 263 (analog formant)
- TMS5110A
- TMS5200
- MSP50C6XX (sold to Sensory, Inc. in 2001)
- Hitachi HD38880BP (Vanguard Arcade Game SNK 1981)

Current (as of 2013):

- Magnevation SpeakJet (www.speechchips.com): TTS256, hobby and experimenter.
- Epson S1V30120F01A100 (www.epson.com): IC, DECTalk-based voice, robotic, English/Spanish.
- TextSpeak (www.textspeak.com): ICs, modules and industrial enclosures in 24 languages. Human sounding, phoneme based.

Hardware and software systems

Popular systems offering speech synthesis as a built-in capability.

Mattel

The Mattel Intellivision game console offered the Intellivoice Voice Synthesis module in 1982. It included the SP0256 Narrator speech synthesizer chip on a removable cartridge. The Narrator had 2kB of Read-Only Memory (ROM), and this was utilized to store a database of generic words that could be combined to make phrases in Intellivision games. Since the Orator chip could also accept speech data from external memory, any additional words or phrases needed could be stored inside the cartridge itself. The data consisted of strings of analog-filter coefficients to modify the behavior of the chip's synthetic vocal-tract model, rather than simple digitized samples.

SAM

Also released in 1982, Software Automatic Mouth (SAM) was the first commercial all-software voice synthesis program. It was later used as the basis for Macintalk. The program was available for non-Macintosh Apple computers (including the Apple II, and the Lisa), various Atari models and the Commodore 64. The Apple version preferred additional hardware that contained DACs, although it could instead use the computer's one-bit audio output (with the addition of much distortion) if the card was not present. The Atari made use of the embedded POKEY audio chip. Speech playback on the Atari normally disabled interrupt requests and shut down the ANTIC chip during vocal output. The audible output is extremely distorted speech when the screen is on. The Commodore 64 made use of the 64's embedded SID audio chip.

Atari

Arguably, the first speech system integrated into an operating system was the 1400XL/1450XL personal computers designed by Atari, Inc. using the Votrax SC01 chip in 1983. The 1400XL/1450XL computers used a Finite State Machine to enable World English Spelling text-to-speech synthesis. Unfortunately, the 1400XL/1450XL personal computers never shipped in quantity. The Atari ST computers were sold with 'stspeech.tos' on floppy disk.

Apple

The first speech system integrated into an operating system that shipped in quantity was Apple Computer's MacInTalk. The software was licensed from third-party developers Joseph Katz and Mark Barton (later, SoftVoice, Inc.) and was featured during the 1984 introduction of the Macintosh computer.

This January demo required 512 kilobytes of RAM. As a result, it could not run in the 128 kilobytes of RAM the first Mac actually shipped with. So, the demo was accomplished with a prototype 512k Mac, although those in attendance were not told of this and the synthesis demo created considerable excitement for the Macintosh. In the early 1990s Apple expanded its capabilities, offering system-wide text-to-speech support.

With the introduction of faster PowerPC-based computers they included higher quality voice sampling. Apple also introduced speech recognition into its systems, which provided a fluid command set. More recently, Apple has added sample-based voices. Starting as a curiosity, the speech system of Apple has evolved into a fully supported program, PlainTalk, for people with vision problems. VoiceOver was for the first time featured in Mac OS X Tiger (10.4). During 10.4 (Tiger) and the first releases of 10.5 (Leopard) there was only one standard voice shipping with Mac OS X. Starting with 10.6 (Snow Leopard), the user can choose from a wide range of voices.


VoiceOver voices feature the taking of realistic-sounding breaths between sentences, as well as improved clarity at high read rates over PlainTalk. Mac OS X also includes say, a command-line based application that converts text to audible speech. The AppleScript Standard Additions includes a say verb that allows a script to use any of the installed voices and to control the pitch, speaking rate and modulation of the spoken text. The Apple iOS operating system used on the iPhone, iPad and iPod Touch uses VoiceOver speech synthesis for accessibility. Some third party applications also provide speech synthesis to facilitate navigating, reading web pages or translating text.
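On macOS the command-line speech application is `say`, which accepts `-v` to pick a voice and `-r` to set the speaking rate in words per minute. The wrapper below is a small sketch around that command; the helper names are invented for this example, the voice name in the usage note is only an example of an installed voice, and on systems without `say` the `speak` function simply reports that nothing was spoken.

```python
import shutil
import subprocess


def build_say_command(text, voice=None, rate=None):
    """Assemble the argument list for the macOS `say` command."""
    cmd = ["say"]
    if voice:
        cmd += ["-v", voice]      # choose one of the installed voices
    if rate:
        cmd += ["-r", str(rate)]  # speaking rate in words per minute
    cmd.append(text)
    return cmd


def speak(text, voice=None, rate=None):
    """Speak `text` if `say` is available; return whether speech was attempted."""
    if shutil.which("say") is None:
        return False
    subprocess.run(build_say_command(text, voice, rate), check=True)
    return True
```

For example, `speak("Hello there", voice="Alex", rate=180)` would read the phrase aloud on a Mac where that voice is installed. Passing the arguments as a list rather than a shell string avoids quoting problems with arbitrary text.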

The second operating system to feature advanced speech synthesis capabilities was AmigaOS, introduced in 1985. The voice synthesis was licensed by Commodore International from SoftVoice, Inc., who also developed the original MacinTalk text-to-speech system. It featured a complete system of voice emulation for American English, with both male and female voices and 'stress' indicator markers, made possible through the Amiga's audio chipset. The synthesis system was divided into a translator library, which converted unrestricted English text into a standard set of phonetic codes, and a narrator device, which implemented a formant model of speech generation. AmigaOS also featured a high-level 'Speak Handler', which allowed command-line users to redirect text output to speech. Speech synthesis was occasionally used in third-party programs, particularly word processors and educational software. The synthesis software remained largely unchanged from the first AmigaOS release and Commodore eventually removed speech synthesis support from AmigaOS 2.1 onward.

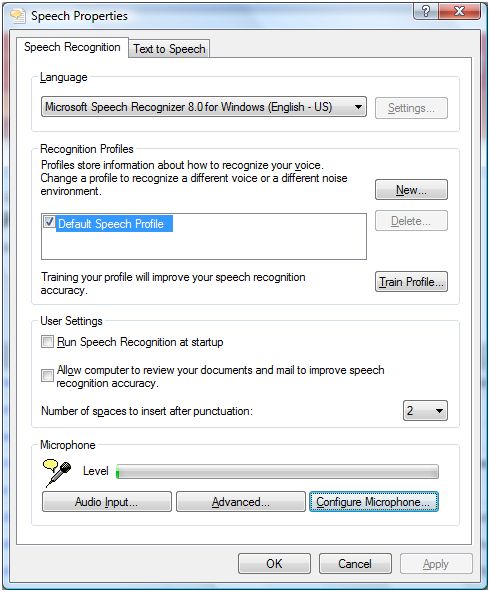

Despite the American English phoneme limitation, an unofficial version with multilingual speech synthesis was developed. This made use of an enhanced version of the translator library which could translate a number of languages, given a set of rules for each language.

Microsoft Windows

Modern Windows desktop systems can use SAPI 4 and SAPI 5 components to support speech synthesis and speech recognition.

SAPI 4.0 was available as an optional add-on for Windows 95 and Windows 98. Windows 2000 added Narrator, a text-to-speech utility for people who have visual impairment. Third-party programs such as JAWS for Windows, Window-Eyes, Non-visual Desktop Access, Supernova and System Access can perform various text-to-speech tasks such as reading text aloud from a specified website, email account, text document, the Windows clipboard, the user's keyboard typing, etc.

Not all programs can use speech synthesis directly. Some programs can use plug-ins, extensions or add-ons to read text aloud. Third-party programs are available that can read text from the system clipboard. Microsoft Speech Server is a server-based package for voice synthesis and recognition. It is designed for network use with web applications and call centers.

Texas Instruments TI-99/4A

In the early 1980s, TI was known as a pioneer in speech synthesis, and a highly popular plug-in speech synthesizer module was available for the TI-99/4 and 4A.

Speech synthesizers were offered free with the purchase of a number of cartridges and were used by many TI-written video games (notable titles offered with speech during this promotion were Alpiner and Parsec). The synthesizer uses a variant of linear predictive coding and has a small built-in vocabulary. The original intent was to release small cartridges that plugged directly into the synthesizer unit, which would increase the device's built-in vocabulary. However, the success of software text-to-speech in the Terminal Emulator II cartridge cancelled that plan.

Text-to-speech systems

Text-to-Speech (TTS) refers to the ability of computers to read text aloud. A TTS Engine converts written text to a phonemic representation, then converts the phonemic representation to waveforms that can be output as sound.
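The linear predictive coding mentioned above for the TI synthesizer models each output sample as a weighted sum of previous output samples plus an excitation term. The sketch below shows only the textbook direct-form all-pole synthesis filter. It is not the specific variant used in the TI hardware, and the function name and coefficient values are assumptions made for illustration.

```python
def lpc_synthesize(excitation, coeffs, gain=1.0):
    """All-pole LPC synthesis: s[n] = gain * e[n] + sum_k coeffs[k-1] * s[n-k]."""
    order = len(coeffs)
    history = [0.0] * order  # most recent output first
    out = []
    for e in excitation:
        s = gain * e + sum(a * h for a, h in zip(coeffs, history))
        out.append(s)
        # Shift the new sample into the history, keeping `order` entries.
        history = ([s] + history)[:order]
    return out
```

With a single coefficient of 0.5, a unit impulse decays by half on each sample: `lpc_synthesize([1.0, 0.0, 0.0, 0.0], [0.5])` returns `[1.0, 0.5, 0.25, 0.125]`. Because only a handful of coefficients and an excitation description need to be stored per frame, this kind of model suits devices with very little memory.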

TTS engines with different languages, dialects and specialized vocabularies are available through third-party publishers.

Android

Version 1.6 of Android added support for speech synthesis (TTS).

Internet

Currently, there are a number of applications, plugins and gadgets that can read messages directly from an e-mail client and web pages from a web browser or toolbar, such as Text to Voice, which is an add-on to Firefox. Some specialized software can narrate RSS feeds. On one hand, online RSS-narrators simplify information delivery by allowing users to listen to their favourite news sources and to convert them to podcasts.

On the other hand, on-line RSS-readers are available on almost any PC connected to the Internet. Users can download generated audio files to portable devices, e.g. with the help of a podcast receiver, and listen to them while walking, jogging or commuting to work. A growing field in Internet-based TTS is web-based assistive technology, e.g. 'Browsealoud' from a UK company and Readspeaker.

It can deliver TTS functionality to anyone (for reasons of accessibility, convenience, entertainment or information) with access to a web browser. The Pediaphon project was created in 2006 to provide a similar web-based TTS interface to Wikipedia. Other work is being done in the context of the W3C through the W3C Audio Incubator Group with the involvement of The BBC and Google Inc.

Open source

Systems that operate on free and open source software systems including Linux are various, and include programs such as the Festival Speech Synthesis System, which uses diphone-based synthesis as well as more modern and better-sounding techniques, eSpeak, which supports a broad range of languages, and gnuspeech, which uses articulatory synthesis, from the Free Software Foundation.

Others

Following the commercial failure of the hardware-based Intellivoice, gaming developers sparingly used software synthesis in later games.

A famous example is the introductory narration of Nintendo's Super Metroid game for the Super Nintendo Entertainment System. Earlier systems from Atari, such as the Atari 5200 (Baseball) and the Atari 2600 (Quadrun and Open Sesame), also had games utilizing software synthesis. Some e-book readers also include speech synthesis, such as the Amazon Kindle, Samsung E6, PocketBook eReader Pro, and the Bebook Neo. The BBC Micro incorporated the Texas Instruments TMS5220 speech synthesis chip. Some models of Texas Instruments home computers produced in 1979 and 1981 were capable of text-to-phoneme synthesis or reciting complete words and phrases (text-to-dictionary), using a very popular Speech Synthesizer peripheral. TI used a proprietary codec to embed complete spoken phrases into applications, primarily video games. IBM's OS/2 Warp 4 included VoiceType, a precursor to IBM ViaVoice.

Navigation units from several manufacturers use speech synthesis for automobile navigation. Yamaha produced a music synthesizer in 1999, the Yamaha FS1R, which included a formant synthesis capability. Sequences of up to 512 individual vowel and consonant formants could be stored and replayed, allowing short vocal phrases to be synthesized.

Digital sound-alikes

With the 2016 introduction of the Adobe Voco audio editing and generating software prototype, slated to be part of the Adobe Creative Suite, and the similarly enabled DeepMind WaveNet, a deep neural network based audio synthesis software from Google, speech synthesis is verging on being completely indistinguishable from a real human's voice. Adobe Voco takes approximately 20 minutes of the desired target's speech and after that it can generate a sound-alike voice, even with phonemes that were not present in the training material. The software poses ethical concerns, as it allows other people's voices to be stolen and manipulated to say anything desired. This adds to the pressure created by two related developments. Human image synthesis since the early 2000s has improved beyond the point of a human's ability to tell a real human imaged with a real camera from a simulation of a human imaged with a simulation of a camera. 2D video forgery techniques were presented in 2016 that allow near real-time counterfeiting of facial expressions in existing 2D video.

Speech synthesis markup languages

A number of markup languages have been established for the rendition of text as speech in an XML-compliant format. The most recent is Speech Synthesis Markup Language (SSML), which became a W3C recommendation in 2004. Older speech synthesis markup languages include Java Speech Markup Language (JSML) and SABLE. Although each of these was proposed as a standard, none of them have been widely adopted. Speech synthesis markup languages are distinguished from dialogue markup languages. VoiceXML, for example, includes tags related to speech recognition, dialogue management and touchtone dialing, in addition to text-to-speech markup.
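To make the idea of XML-compliant speech markup concrete, the sketch below assembles a small SSML document as a string. The `speak`, `break`, `prosody` and `say-as` elements are part of SSML, but the helper name, the chosen attribute values and the `cardinal` hint are assumptions for this example, and how a given engine interprets `say-as` values varies.

```python
from xml.sax.saxutils import escape

# Namespace of SSML 1.0 documents.
SSML_NS = "http://www.w3.org/2001/10/synthesis"


def build_ssml(text, number, pause_ms=500):
    """Return an SSML string: some text, a pause, then a slowly spoken number."""
    return (
        f'<speak version="1.0" xmlns="{SSML_NS}" xml:lang="en-US">'
        f"{escape(text)}"                      # escape &, < and > in plain text
        f'<break time="{int(pause_ms)}ms"/>'   # explicit pause
        f'<prosody rate="slow">'               # slow down the enclosed content
        f'<say-as interpret-as="cardinal">{int(number)}</say-as>'
        f"</prosody>"
        f"</speak>"
    )
```

The returned string can be checked for well-formedness with any XML parser before it is handed to a synthesizer that accepts SSML. The markup only describes how the text should be rendered; it contains none of the recognition or dialogue control found in dialogue markup languages.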

Applications

Speech synthesis has long been a vital assistive technology tool and its application in this area is significant and widespread. It allows environmental barriers to be removed for people with a wide range of disabilities. The longest application has been in the use of screen readers for people with visual impairment, but text-to-speech systems are now commonly used by people with dyslexia and other reading difficulties as well as by pre-literate children. They are also frequently employed to aid those with severe speech impairment, usually through a dedicated voice output communication aid.

Speech synthesis techniques are also used in entertainment productions such as games and animations. In 2007, Animo Limited announced the development of a software application package based on its speech synthesis software FineSpeech, explicitly geared towards customers in the entertainment industries, able to generate narration and lines of dialogue according to user specifications.

The application reached maturity in 2008, when NEC announced a web service that allows users to create phrases from the voices of characters. In recent years, text-to-speech for disability and handicapped communication aids has become widely deployed in mass transit. Text-to-speech is also finding new applications outside the disability market.

For example, speech synthesis, combined with speech recognition, allows for interaction with mobile devices via natural language processing interfaces. Text-to-speech is also used in second language acquisition. Voki, for instance, is an educational tool created by Oddcast that allows users to create their own talking avatar, using different accents.

They can be emailed, embedded on websites or shared on social media. In addition, speech synthesis is a valuable computational aid for the analysis and assessment of speech disorders. A voice quality synthesizer, developed by Jorge C. Lucero et al. at the University of Brasilia, simulates the physics of phonation and includes models of vocal frequency jitter and tremor, airflow noise and laryngeal asymmetries.

The synthesizer has been used to mimic the timbre of dysphonic speakers with controlled levels of roughness, breathiness and strain.

APIs

Multiple companies offer TTS APIs to their customers to accelerate development of new applications utilizing TTS technology. For mobile app development, the Android operating system has offered a text-to-speech API for a long time. Most recently, with iOS 7, Apple started offering an API for text-to-speech.


EECS E6870 Speech Recognition, Fall 2009: Course outline

The Topic links will take you to the slides for that lecture. Slides for a lecture will be posted by 8pm the night before the lecture. The PDF links in the Readings column will take you to PDF versions of all required readings (i.e., if no PDF version is available for a paper, the paper is not required reading).

Key for sources of readings:

- Holmes: Speech Synthesis and Recognition, J. Holmes.
- R+J: Fundamentals of Speech Recognition, Rabiner, Juang.
- J+M: Speech and Language Processing, Jurafsky, Martin, 2nd ed.
- Jelinek: Statistical Methods for Speech Recognition, Jelinek.
- HAH: Spoken Language Processing, Huang, Acero, Hon.

| Lecture | Date | Topic | Readings |
|---|---|---|---|
| 1 | 2009-09-08 | | Optional: speech production: Holmes Ch. 2, R+J Sec. 2.1-2.4; speech perception: Holmes Ch. 29-36, R+J Sec. 3.5; speech capture: HAH p. 486-497; signal processing: HAH p. 201-223, 242-245, R+J p. |
| 2 | 2009-09-15 | | Required: MFCC: HAH Sec. 6.5.2; LPC: R+J Sec. 3.3.1-3.3.3; PLP: Gold+Morgan Sec. 22.1-22.2; DTW: Holmes Sec. Optional: DTW: R+J p. 200-226, Sakoe+Chiba paper. |
| 3 | 2009-09-22 | | Required: GMM's: Duda+Hart+Stork p. 517-528, HAH p. 92-95; HMM's: Holmes, p. 127-132, HAH p. |
| 4 | 2009-09-29 | | Required: HMM's: Rabiner 'A tutorial on HMM's', Poritz 'HMM's: A Guided Tour', Holmes, p. 133-158, HAH p. 441-443, Duda+Hart+Stork p. |
| 5 | 2009-10-06 | | Required: N-gram's: J+M Ch. Optional: Chen+Goodman paper. |
| 6 | 2009-10-13 | | Required: pronunciation modeling: HAH p. 428-436, Holmes p. 186-196; decision trees: HAH p. 175-189, Duda+Hart+Stork p. |
| 7 | 2009-10-20 | | Optional: FST's: Pereira+Riley paper. |
| 8 | 2009-10-27 | | Required: Mohri+Pereira+Riley paper, Aubert paper. Optional: Ney+Ortmanns paper, HAH p. 608-630, Aho+Sethi+Ullman p. |
| | 2009-11-03 | Election Day | |
| 9 | 2009-11-10 | | Required: HAH p. 444-451 (MAP and MLLR); HAH p. 515-519 (spectral subtraction), p. 522-525 (CMR), p. 528-529 (retraining); Leggetter+Woodland paper, Gales paper (MLLR); Gauvain+Lee paper (MAP); Acero+Stern paper (CDCN); Gales+Young paper (PMC). |
| 10 | 2009-11-17 | | Optional: class n-grams: Brown paper; grammatical LM's: Chelba paper; topic LM's: Seymore paper; maximum entropy and triggers: Rosenfeld paper; everything and a bag of chips: Goodman paper. |
| 11 | 2009-11-24 | | Required: Duda+Hart+Stork p. 114-124 (LDA); Povey+Woodland paper (MMI); Povey+Woodland paper (MPE); Mangu+Brill+Stolcke paper (consensus decoding); Fiscus paper (ROVER system combination). |
| 12 | 2009-12-01 | | Optional: None. |
| 13 | 2009-12-08 | Project presentations | |

Last updated: 2009 Sep 07.